Why Process Beats Raw Capability: What Andrew Ng’s Agentic Workflows Mean for Your Operations

Here’s a number that should reframe how you think about AI spending.

Andrew Ng — co-founder of Google Brain, former chief scientist at Baidu, the guy who’s been teaching machine learning to millions through Stanford and DeepLearning.AI — has been presenting a data point that most of the AI hype machine is quietly ignoring. When his team analyzed results from multiple research groups on the HumanEval coding benchmark, they found this:

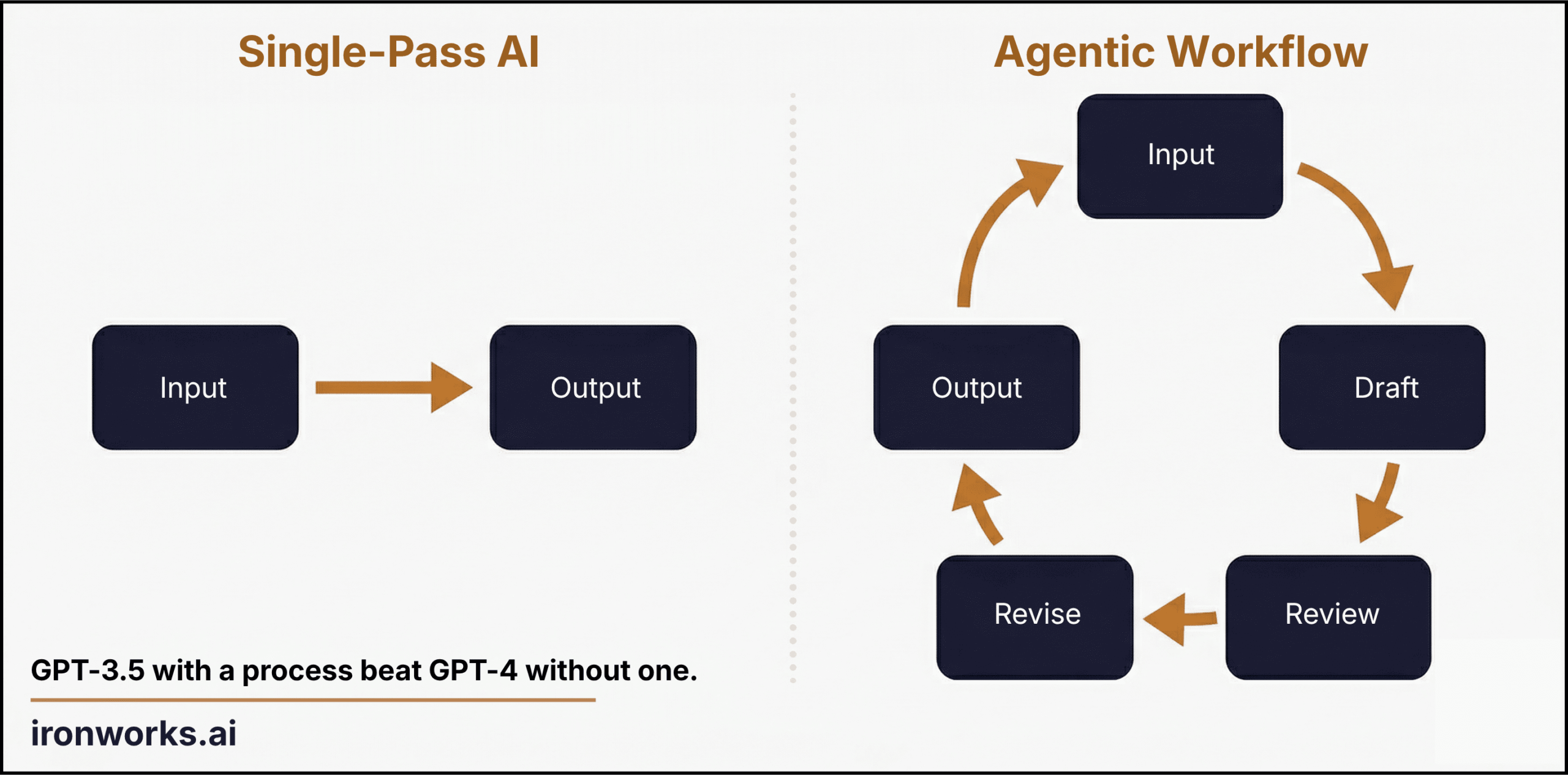

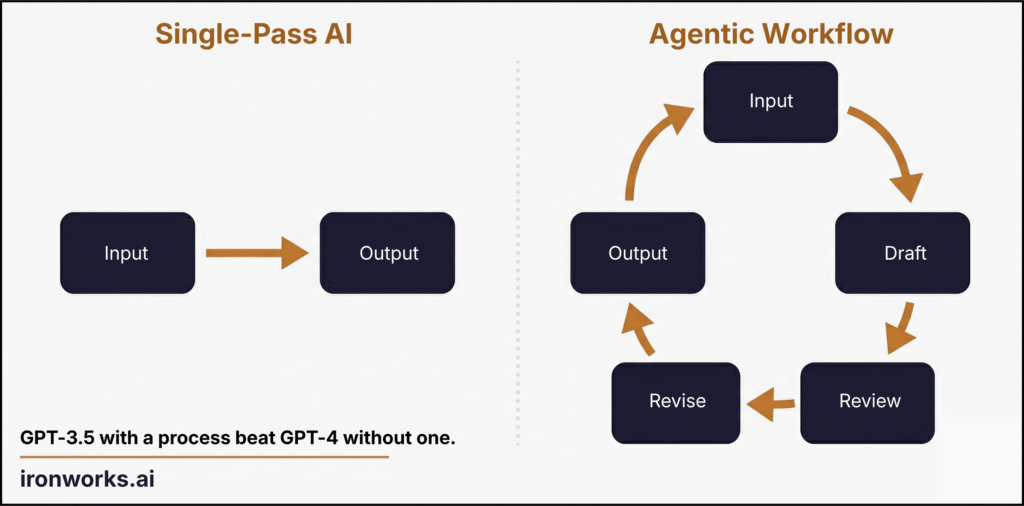

GPT-3.5, the older and cheaper model, wrapped in an agentic workflow (a system where the AI drafts, reviews its own work, identifies errors, and revises before producing a final output), scored as high as 95.1%.

GPT-4, the newer and significantly more expensive model, used in a single pass — you give it the prompt, it gives you an answer, done — scored 67%.

Let me say that differently. The cheap model with a good process demolished the expensive model without one.

If you’ve spent any time running a business, that result shouldn’t surprise you even slightly. It’s the same thing every operator learns the hard way: process beats raw capability. Every time. A brilliant employee with no system underperforms a competent employee inside a good one.

What’s interesting is that we now have hard data showing it works the same way with AI.

What “Agentic” Actually Means (Without the Jargon)

The AI industry has turned “agentic” into a buzzword, which is unfortunate, because the underlying idea is dead simple and genuinely useful.

An agentic workflow is just a loop. That’s it. Instead of asking AI to do something once and hoping for the best — which is what most people do when they type a prompt into ChatGPT — you build a system where the AI:

- Drafts an initial output

- Reviews that output against a set of rules or criteria

- Identifies what’s wrong or missing

- Revises the output

- Checks again

- Repeats the identify-revise-check cycle until the output meets the standard

- Produces the final result
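Here’s a minimal Python sketch of that loop. The `draft`, `review`, and `revise` functions are hypothetical stand-ins: in a real system each would call a language model or a rule checker, but here they’re deterministic toys so the control flow is easy to follow. The `max_revisions` cap is an assumption, added so the loop hands off to a human instead of spinning forever.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class LoopResult:
    output: str
    revisions: int                 # number of revise passes made
    passed: bool                   # True if the last review found no issues
    issues: list[str] = field(default_factory=list)  # open issues handed to a human


def run_agentic_loop(
    task: str,
    draft: Callable[[str], str],
    review: Callable[[str], list[str]],
    revise: Callable[[str, list[str]], str],
    max_revisions: int = 3,
) -> LoopResult:
    output = draft(task)                        # draft an initial output
    revisions = 0
    while True:
        issues = review(output)                 # review it and list what's wrong
        if not issues:
            return LoopResult(output, revisions, True)  # meets the standard
        if revisions >= max_revisions:
            # Out of budget: stop and escalate instead of looping forever.
            return LoopResult(output, revisions, False, issues)
        output = revise(output, issues)         # revise, then check again
        revisions += 1


# Hypothetical toy rules: a client report must mention each of these terms.
REQUIRED_TERMS = ["quarter", "total", "next steps"]


def toy_draft(task: str) -> str:
    return f"Report for {task}. Quarter: Q3."


def toy_review(text: str) -> list[str]:
    return [term for term in REQUIRED_TERMS if term not in text.lower()]


def toy_revise(text: str, issues: list[str]) -> str:
    # Fix only the first issue per pass so the loop visibly iterates.
    return text + f" {issues[0].capitalize()}: TBD."


result = run_agentic_loop("Acme Corp", toy_draft, toy_review, toy_revise)
print(result.passed, result.revisions)  # True 2
print(result.output)
```

The cap is the part worth keeping in your own version: when the loop can’t get an output past review, it returns the open issues instead of pretending it succeeded, and that’s where a person steps in.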

That’s not science fiction. That’s how you’d train a good junior employee. “Write a first draft. Check it against the template. Fix what doesn’t match. Have someone review it. Revise. Submit.”

The only difference is that the junior employee does this in four hours and occasionally forgets step three. The agentic workflow does it in four minutes and never skips a step.

Ng breaks agentic workflows into four design patterns, and they’re worth understanding because they map directly to how real businesses operate:

Reflection: The AI reviews its own output and identifies weaknesses. Think of it as a built-in quality check. Instead of producing one draft and calling it done, the system critiques itself and revises. This is the kind of loop behind the 95.1% benchmark result: the same model, iterating on its own work, producing dramatically better output than it does in a single attempt.

Tool Use: The AI can call external tools — pull data from a database, run a calculation, search for current information — as part of its workflow. This is how you get AI that doesn’t just generate text but actually does work: looking up a client’s history, checking pricing against a rate card, verifying that a date falls within the contract window.

Planning: The AI breaks a complex task into subtasks before executing them. Instead of trying to write an entire proposal in one shot, it outlines the sections, identifies what information it needs for each, gathers that information, then writes each section sequentially.

Multi-Agent Collaboration: Multiple AI “agents” work together, each with a different role. One drafts, another reviews for compliance, a third checks the numbers. This mirrors how a real team operates — you wouldn’t have the same person write the proposal and approve the budget.
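Of the four patterns, tool use is the one people find least intuitive, so here’s a rough Python sketch of the mechanics. The model doesn’t run anything itself; it emits a structured request naming a tool and its arguments, your code runs the matching function, and the result goes back into the loop. The JSON shape, the tool names (`client_history`, `date_in_contract_window`), and the in-memory data are all hypothetical placeholders, not any particular vendor’s function-calling API.

```python
import json
from datetime import date

# Hypothetical in-memory stand-ins for a CRM and a contract record.
CLIENT_HISTORY = {"acme": ["2023 audit", "2024 site assessment"]}
CONTRACT_WINDOW = {"acme": (date(2024, 1, 1), date(2024, 12, 31))}


def client_history(client: str) -> list[str]:
    """Look up past engagements for a client."""
    return CLIENT_HISTORY.get(client, [])


def date_in_contract_window(client: str, day: str) -> bool:
    """Check whether an ISO date (YYYY-MM-DD) falls inside the client's contract."""
    start, end = CONTRACT_WINDOW[client]
    return start <= date.fromisoformat(day) <= end


# The registry is a whitelist: the model can only trigger what is listed here.
TOOLS = {
    "client_history": client_history,
    "date_in_contract_window": date_in_contract_window,
}


def dispatch(tool_call_json: str):
    """Run one tool request of the form {"tool": name, "args": {...}}."""
    call = json.loads(tool_call_json)
    name = call["tool"]
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    return TOOLS[name](**call["args"])


# Pretend the model asked for this while drafting an invoice note.
request = '{"tool": "date_in_contract_window", "args": {"client": "acme", "day": "2024-06-15"}}'
print(dispatch(request))  # True
```

The whitelist is the design choice worth copying: the model proposes an action, and your code decides whether it actually runs.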

None of this is theoretical. These patterns are running in production at companies right now. The question isn’t whether they work. It’s whether they’ll get applied to the businesses that need them most.

Why This Should Matter to a 30-Person Company

Let me paint a picture that’ll be familiar to anyone running an operation.

Note: This is a hypothetical composite — not a specific client. But the numbers are drawn from industry benchmarks and my own experience running a $12M/year professional services firm.

Take a 30-person company. Could be professional services, environmental consulting, a staffing agency, a marketing firm — the industry almost doesn’t matter. What matters is that this company has a handful of processes that eat time and create friction every single week.

Quarterly reporting. Every quarter, your team compiles client reports. Right now, it takes 3 people the better part of a week. Someone pulls data from three different systems. Someone else formats it into the client template. A senior person reviews each one for accuracy, finds errors in about 30% of them on the first pass, and sends those back. Reports go out late more often than not, and when they’re late, clients notice.

An agentic workflow handles this differently. The system pulls data from your connected sources, populates the report template, checks figures against previous quarters for anomalies, flags anything that looks off for a human to review, and generates a draft that’s 85% ready for final sign-off. A week of work across three people becomes a day and a half for one person. Reports go out on time. Every quarter.
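The “checks figures against previous quarters” step is less magic than it sounds. A minimal Python sketch, assuming each metric arrives as a single number per quarter and treating any quarter-over-quarter swing above a chosen threshold (25% here, an arbitrary placeholder) as something a human should look at:

```python
def flag_anomalies(previous: dict[str, float], current: dict[str, float],
                   threshold: float = 0.25) -> list[str]:
    """Return readable flags for metrics that moved more than `threshold`."""
    flags = []
    for metric, now in current.items():
        before = previous.get(metric)
        if before is None:
            flags.append(f"{metric}: no prior-quarter value to compare")
        elif before == 0:
            if now != 0:
                flags.append(f"{metric}: was 0 last quarter, now {now:,.0f}")
        else:
            change = (now - before) / abs(before)
            if abs(change) > threshold:
                flags.append(f"{metric}: {change:+.0%} vs last quarter")
    return flags


# Hypothetical client figures.
q2 = {"billable_hours": 1200, "revenue": 180_000, "open_tickets": 14}
q3 = {"billable_hours": 1250, "revenue": 95_000, "open_tickets": 15, "new_sites": 3}

for flag in flag_anomalies(q2, q3):
    print(flag)
# revenue: -47% vs last quarter
# new_sites: no prior-quarter value to compare
```

The system only flags. A person still decides whether a 47% revenue drop is a data-entry error or real news for the client.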

Proposals and SOWs. Your senior people — the ones billing at $175-$250/hour — are spending 6-8 hours on each proposal draft. They’re pulling language from previous proposals, customizing scope sections, adjusting pricing, formatting, reviewing, and revising. Most of this work follows a pattern. About 60-70% of every proposal is the same structure with different specifics.

An agentic workflow drafts the proposal from a template, populates it with client-specific information, checks pricing against your rate card, reviews the scope against similar past projects for consistency, flags anything that deviates from your norms, and produces a draft that’s 80% ready for senior review. Your senior person goes from spending 6 hours creating to spending 90 minutes reviewing and refining. That’s not laziness. That’s the move.
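Checking pricing against a rate card is a plain lookup, not a judgment call, which is exactly why it’s a good candidate to hand off. A sketch, assuming a hypothetical rate card keyed by role and proposal line items that carry a role and a proposed hourly rate, that flags anything priced away from the card by more than a tolerance:

```python
from dataclasses import dataclass

# Hypothetical rate card, in dollars per hour.
RATE_CARD = {"principal": 250, "senior": 175, "analyst": 110}


@dataclass
class LineItem:
    role: str
    rate: float  # proposed $/hour


def check_pricing(items: list[LineItem], tolerance: float = 0.05) -> list[str]:
    """Flag line items priced more than `tolerance` away from the rate card."""
    flags = []
    for item in items:
        card_rate = RATE_CARD.get(item.role)
        if card_rate is None:
            flags.append(f"{item.role}: role not on rate card")
            continue
        deviation = (item.rate - card_rate) / card_rate
        if abs(deviation) > tolerance:
            flags.append(f"{item.role}: ${item.rate:,.0f}/h vs card ${card_rate}/h ({deviation:+.0%})")
    return flags


draft = [LineItem("principal", 250), LineItem("senior", 150), LineItem("intern", 60)]
for flag in check_pricing(draft):
    print(flag)
# senior: $150/h vs card $175/h (-14%)
# intern: role not on rate card
```

The rate card comes from your own data, not from the model. The model drafts; the lookup decides whether the numbers match your norms.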

Client onboarding. New client starts. Someone creates the project in your PM tool. Someone else sets up the shared drive. Another person sends the welcome email with the portal login. Someone populates the initial task list. Someone else schedules the kickoff call. Five people, twelve steps, three days, and somebody always forgets the NDA.

An agentic workflow executes the entire checklist: creates the project, populates tasks from a template based on the service type, generates and sends the welcome packet, schedules the kickoff, and produces a status report of what’s been completed and what needs human attention. The NDA doesn’t get forgotten, because it’s in the template and the system runs every step, every time.
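Onboarding is the most mechanical of the three: pick a checklist by service type, run it top to bottom, and report at the end. A minimal sketch, where the service types, step names, and the `run_step` function are hypothetical stand-ins for your real integrations (PM tool, shared drive, email, calendar):

```python
from typing import Callable

# Hypothetical checklist templates, keyed by service type.
CHECKLISTS = {
    "consulting": ["create_project", "create_shared_drive", "send_nda",
                   "send_welcome_packet", "schedule_kickoff"],
    "staffing": ["create_project", "send_nda", "send_welcome_packet"],
}


def onboard(client: str, service_type: str,
            run_step: Callable[[str, str], bool]) -> dict[str, list[str]]:
    """Run every step for the service type and report what needs a human."""
    report: dict[str, list[str]] = {"completed": [], "needs_attention": []}
    for step in CHECKLISTS[service_type]:
        try:
            ok = run_step(step, client)
        except Exception:
            ok = False  # one failed integration shouldn't stop the rest of the checklist
        if ok:
            report["completed"].append(step)
        else:
            report["needs_attention"].append(step)
    return report


def fake_run_step(step: str, client: str) -> bool:
    # Stand-in for real integrations; pretend the calendar API is down today.
    return step != "schedule_kickoff"


status = onboard("Acme Corp", "consulting", fake_run_step)
print(status["needs_attention"])  # ['schedule_kickoff']
```

Failures don’t vanish: anything that didn’t complete lands in the status report for a person to pick up.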

The Math That Matters

Here’s where I’m going to put numbers on this, because “it saves time” means nothing without a dollar sign attached.

Take that same hypothetical 30-person company. Let’s say they’re doing $8M in annual revenue with a 20% net margin — so $1.6M in actual profit. That’s a healthy but not extravagant business.

Across those three processes alone (quarterly reporting, proposals, and onboarding), the team is collectively burning roughly 35 hours per week in process overhead. That’s manual routing, drafting from scratch, copy-pasting between systems, chasing approvals, and fixing the things that fall through the cracks.

35 hours per week for 52 weeks at a blended loaded cost of $65/hour (salary plus benefits plus overhead for the mix of senior and junior people touching these processes) equals $118,300 per year.

If agentic workflows cut that overhead by 70% (an assumption I consider conservative based on the pattern of results I’ve seen in process automation; Ng’s benchmark measures output quality, not hours saved), you’re recovering roughly 24.5 hours per week. That’s about $82,810 annually in reclaimed capacity.

Call it roughly $120K once you factor in the error reduction, the faster client response times, and the senior staff hours redirected to billable work instead of administrative overhead.

$120K annually for a company with $1.6M in profit is 7.5% of the bottom line. That’s not marginal. For context, most companies would celebrate a new client worth $120K in annual revenue. This is the equivalent, except there’s no acquisition cost, no service delivery, and no client management. It’s pure operational recovery.
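For anyone who wants to rerun the numbers with their own inputs, here’s the arithmetic as a short Python script. The inputs are the hypothetical figures above. Computing 70% of 35 hours directly gives 24.5 hours a week. The ~$120K headline includes the softer benefits (error reduction, faster responses, senior hours redirected to billable work), which this script doesn’t try to model; it just takes that figure as given.

```python
HOURS_PER_WEEK = 35          # process overhead across the three processes
LOADED_RATE = 65             # blended loaded cost, $/hour
WEEKS_PER_YEAR = 52
REDUCTION = 0.70             # assumed share of overhead the workflows remove
NET_PROFIT = 1_600_000       # $8M revenue at a 20% net margin
HEADLINE_ESTIMATE = 120_000  # the article's figure, including softer benefits

annual_overhead = HOURS_PER_WEEK * LOADED_RATE * WEEKS_PER_YEAR
hours_recovered = HOURS_PER_WEEK * REDUCTION
direct_savings = annual_overhead * REDUCTION
share_of_profit = HEADLINE_ESTIMATE / NET_PROFIT

print(f"Annual overhead:          ${annual_overhead:,.0f}")  # $118,300
print(f"Hours recovered per week: {hours_recovered:.1f}")     # 24.5
print(f"Direct savings:           ${direct_savings:,.0f}")   # $82,810
print(f"$120K as share of profit: {share_of_profit:.1%}")    # 7.5%
```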

And here’s the part that should keep you up at night: your competitors are going to figure this out. Maybe not this quarter. Maybe not this year. But the firms that build these loops into their operations will structurally operate at a lower cost base than the ones that don’t. That gap compounds.

What NOT to Do

I should be honest about this part, because the temptation after reading something like this is to go try to make everything agentic by next Tuesday. Don’t.

The companies that waste money on AI aren’t the ones that refuse to adopt it. They’re the ones that try to adopt it everywhere simultaneously with no process discipline. I’ve watched this happen with CRM implementations, ERP rollouts, and every other enterprise technology wave for 15 years. The pattern is always the same: buy the tool, try to apply it to everything, get mediocre results everywhere, declare that it “doesn’t work for our business.”

Here’s what actually works:

Pick one process. One. The one that’s most repetitive, most documented, and most annoying to your team. Not the most complex one — the most boring one. Boring means predictable, and predictable means a loop can handle it.

Map it before you build anything. Write down every step. Every decision point. Every exception. Every “well, it depends” moment. If you can’t describe the process on paper, you definitely can’t encode it into a workflow. This step takes longer than the actual AI implementation. It’s also the step that creates 80% of the value, because most companies have never actually documented how they do things — they just do them.

Build the loop small. Draft, check, revise, output. Start with that. Don’t try to bolt on tool use, multi-agent collaboration, and planning all at once. Get the basic loop working on one process, measure the results, then expand.

Keep a human in the loop — initially. The goal isn’t to remove humans from the process. It’s to change what humans spend their time on. Instead of drafting, they’re reviewing. Instead of routing, they’re handling exceptions. Instead of doing the work, they’re quality-checking the work. Over time, as the system proves reliable, you can widen the autonomy. But starting fully automated is how you get expensive mistakes and erode trust with your team.

Measure something real. Hours reclaimed per week. Error rates before and after. Time-to-completion on the process. Client response time. Revenue per employee. Pick metrics that tie to money, not “engagement” or “satisfaction.” You’re making a business case, not writing a mission statement.
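Measuring doesn’t need a dashboard on day one. A sketch, assuming you log each run of the process with the staff hours it took and whether review caught an error on the first pass (the log format and the sample numbers are hypothetical), that compares a before period with an after period:

```python
from dataclasses import dataclass


@dataclass
class Run:
    hours: float      # staff hours spent on one run of the process
    had_error: bool   # did review catch an error on the first pass?


def summarize(runs: list[Run]) -> tuple[float, float]:
    """Return (average hours per run, first-pass error rate)."""
    if not runs:
        raise ValueError("need at least one logged run")
    avg_hours = sum(r.hours for r in runs) / len(runs)
    error_rate = sum(r.had_error for r in runs) / len(runs)
    return avg_hours, error_rate


before = [Run(8, True), Run(6, False), Run(7, False), Run(7, True)]
after = [Run(2, False), Run(1.5, False), Run(2.5, True), Run(2, False)]

(h0, e0), (h1, e1) = summarize(before), summarize(after)
print(f"Hours per run: {h0:.1f} -> {h1:.1f}")            # 7.0 -> 2.0
print(f"First-pass error rate: {e0:.0%} -> {e1:.0%}")    # 50% -> 25%
```

Multiply the hours saved per run by how often the process runs and by your loaded rate, and you have the dollar figure for the business case.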

The Operator’s Observation

I keep coming back to Ng’s benchmark data because it tells a story that extends well beyond AI.

GPT-3.5 is not a better model than GPT-4. It’s a cheaper, less capable model. But paired with a thoughtful process — a loop that includes drafting, self-review, error correction, and iteration — it didn’t just match GPT-4. It destroyed it. 95.1% versus 67%. That’s a 28-point gap in favor of the system with better process design.

Every operator I know has lived some version of this. The new hire who follows the playbook outperforms the veteran who wings it. The average team with great systems beats the talented team with bad ones. The mid-tier vendor with a solid implementation plan beats the premium vendor who tosses you the login credentials and says “good luck.”

Process beats capability. That’s not new. What’s new is that for the first time, you can encode process into software that actually follows it — consistently, at 2 AM, without needing a reminder.

The technology is genuinely ready. It’s been ready. The bottleneck was never the AI. The bottleneck is someone sitting down and doing the unglamorous work of documenting how their business actually operates, then building a loop around it.

That’s not a pitch. That’s arithmetic.