What the BCG AI Study Actually Means for a Services Firm

In September 2023, a team of researchers from Harvard, Wharton, and the Boston Consulting Group ran a field experiment that most people read wrong.

They took 758 BCG consultants — not students, not lab subjects, real consultants doing real work — and randomly assigned them to either use GPT-4 on a set of 18 realistic consulting tasks, or work without it. Then they measured everything.

The headline numbers are impressive enough that they’ve been bouncing around LinkedIn and conference keynotes ever since:

- 12.2% more tasks completed

- 25.1% faster

- Over 40% higher quality output

And that’s where most people stop reading. “AI makes knowledge workers more productive.” Great. Put it on a slide. Move on.

But there’s a number buried in this study that should fundamentally change how you think about running a services firm. And almost nobody’s talking about it.

The Number That Actually Matters

The bottom-performing consultants — the ones in the lower half of the pre-study baseline — improved by 43%.

Forty-three percent.

The top performers improved too, but significantly less. The exact gap varies depending on which metric you’re looking at, but the pattern was consistent across the board: AI compressed the performance distribution. The distance between your best people and your average people got dramatically smaller.

If you run a 40-person professional services firm, read that again. Not as a technology insight. As an economics insight.

What Everyone Else Sees vs. What an Operator Sees

The LinkedIn consensus on this study goes something like: “AI boosts productivity! You should adopt AI tools! The future is now!”

Okay. But that’s not actually useful if you’re the person signing the checks.

Here’s what I see when I look at this data, as someone who built and ran a $12M/year professional services firm:

The bench economics just changed. Every services firm has a performance bell curve. You’ve got your top 20% who deliver outsized value, your solid middle 50% who do reliable work, and your bottom 30% who are competent but require more oversight, produce more revisions, and generally cost more per unit of output than you’d like. That bottom tier isn’t bad — they’re learning, or they’re in the wrong seat, or they just haven’t hit their stride yet. But they’re expensive relative to their output.

If AI closes that performance gap by even half of what this study showed, you’ve just changed the economics of your entire bench.

Your training costs drop. The traditional model is: hire smart people, spend 6-18 months getting them up to speed, absorb the cost of their mistakes during that period, hope they don’t leave after year two. If AI can compress that ramp — not eliminate it, compress it — you recoup the investment faster and reduce the window where junior people are underwater on their cost.

Your hiring math changes. If your B-players can now produce B+ or A- work with AI assistance, the argument for hiring exclusively A-players at A-player salaries gets weaker. You might be better off hiring motivated, coachable people at a lower cost basis and pairing them with good AI workflows. That’s a different recruiting strategy than most services firms are running.

Running the Numbers on a Hypothetical 40-Person Firm

I want to be clear: what follows is a hypothetical scenario, not a case study. I’m applying the study’s findings to a realistic services firm to show what the math looks like. These are estimates, not guarantees.

The setup. A 40-person professional services firm — could be consulting, accounting, marketing, engineering, legal support, whatever. Average blended billing rate: $150/hour. The team bills roughly 1,600 hours per person per year (accounting for admin time, PTO, non-billable work). That’s 64,000 total billable hours across the firm, generating roughly $9.6M in annual revenue.

The speed gain. The study showed a 25.1% increase in task completion speed. No firm is going to capture all of that — some of the speed gain goes to quality checks, some gets absorbed by the uneven nature of real client work, some tasks don’t lend themselves to AI assistance. Let’s say you capture half: a 12.5% effective speed increase on deliverable work.

Across 40 people, 12.5% of 64,000 hours is 8,000 hours. But not every task is AI-eligible. Let’s say 50% of deliverable work can benefit from AI assistance (the study used specific task types, and some work — client relationships, novel strategy, judgment calls — won’t benefit). That’s 4,000 hours. Cut it in half again for real-world friction — adoption isn’t uniform, some people resist, some workflows don’t adapt cleanly. That’s 2,000 hours of recovered capacity.

At $150/hour, that’s $300,000 in capacity you didn’t hire for. Not revenue — capacity. You can choose to bill it (more revenue), reallocate it to business development, or reduce overtime. But it’s real, and it didn’t require adding headcount.

The quality gain. This is where the math gets harder to pin down but the impact is arguably bigger. The study showed 40% higher quality output. In a services firm, quality shows up in a very specific way: revision cycles.

I can speak to this from experience. When I was running a $12M firm, revision cycles were the silent killer of profitability. A junior person drafts a deliverable. A senior person reviews it, marks it up, sends it back. The junior person revises. The senior person reviews again. Sometimes a third round. Sometimes a fourth because the client changed the scope mid-stream and nobody caught it.

Every revision cycle burns hours at two billing rates — the junior person redoing the work and the senior person reviewing it again. Nobody tracks this. Nobody has a line item for “hours spent fixing work that should’ve been right the first time.” But everyone in a services firm feels it. It’s the reason senior people are always overbooked and junior people always seem to be spinning.

If AI-assisted first drafts come in at meaningfully higher quality — fewer factual errors, better structure, more complete analysis — the revision cycle compresses. Maybe you go from an average of 2.5 revision rounds to 1.5. On a team of 40, that’s hundreds of senior hours freed up annually. Senior hours that were being spent on quality control instead of the high-judgment work that actually justifies their billing rate.

I can’t put a precise dollar figure on that because it depends on your revision frequency, your billing structure, and a dozen other variables. But if you’ve ever looked at your utilization reports and wondered why your most expensive people are spending 30% of their time reviewing junior work, this is the mechanism that changes that ratio.

The bottom-performer uplift. Here’s where it gets genuinely interesting for hiring and HR. If your bottom 30% improve by even half of the 43% the study showed — call it a 20% real-world improvement — you’ve just made your worst hiring decisions significantly less expensive.

That person you hired eight months ago who’s competent but slow? They just got meaningfully faster and their output quality went up. The new hire who’s still learning your firm’s approach to deliverables? AI can encode your best practices into their workflow so they’re producing work that looks like it came from someone with three years of experience instead of eight months.

This doesn’t mean you can hire anyone off the street and hand them a ChatGPT login. It means the gap between “competent and willing to learn” and “experienced and polished” just got narrower. And in a labor market where experienced professionals are expensive and scarce, that matters.

What This Does NOT Mean

The study has a second finding that’s just as important as the first, and it gets conveniently ignored by everyone trying to sell you AI tools.

The researchers deliberately included a task that fell outside AI’s capability boundary — what they call the “jagged technological frontier.” It was a task that looked similar to the others on the surface but required a different kind of reasoning.

On that task, consultants working without AI got it right 84% of the time. Consultants using AI? They dropped to 60-70% accuracy.

Read that again. AI made them worse. Not a little worse. Meaningfully worse. On a task that seemed like it should be in AI’s wheelhouse.

The researchers describe it as “falling asleep at the wheel.” When consultants trusted AI on tasks where AI was actually wrong, they stopped applying their own judgment. They deferred to a confident-sounding but incorrect output. The better the AI sounded, the less likely people were to catch the error.

This is the “jagged” part of the jagged frontier. AI’s capability boundary isn’t a clean line. It’s irregular, unpredictable, and the failure mode is subtle — the AI doesn’t say “I don’t know.” It gives you a polished, confident, wrong answer. And the more you’ve been trained to trust it by its successes on other tasks, the more likely you are to accept it.

For a services firm, this means:

You can’t deploy AI without guardrails. Every AI-assisted workflow needs a quality check — not a rubber stamp, an actual review by someone who knows what right looks like. The study shows that blind trust in AI output actively degrades quality on certain tasks.

Not every task is AI-eligible. The “jagged frontier” means you need to map which tasks in your firm actually benefit from AI and which ones don’t. This isn’t a one-time exercise. As models improve, the frontier shifts. What was outside the boundary six months ago might be inside it now, and vice versa.

The biggest risk is confidence, not capability. The AI won’t tell you it’s wrong. Your team won’t catch it if they’ve been conditioned to trust the output. The most dangerous scenario isn’t “AI can’t do this task.” It’s “AI can do 17 of these 18 tasks perfectly, and nobody notices when it gets the 18th one wrong.”

What a Firm Owner Would Actually Do With This

If I were running a 40-person services firm today — and I’m drawing on what I learned running a $12M one — here’s what I’d do with this research. Not in theory. In practice, on a Monday morning.

Week 1: Pick one deliverable type and test it. Not your most important deliverable. Not your most complex one. Pick the one your team does most frequently — the bread-and-butter output that accounts for the highest volume of billable hours. For a consulting firm, that might be the initial analysis memo. For an accounting firm, it might be the standard engagement letter. For a marketing agency, it might be the first-draft campaign brief.

Take five of those deliverables that were recently completed by hand. Feed the inputs into an AI tool and have it produce a first draft. Compare the AI output to what your team produced. Don’t judge it on polish — judge it on completeness, accuracy, and how much editing it would need to get to “ready for client review.”

You’ll know in a week whether this specific deliverable type is inside or outside the frontier for your firm.

Week 2-3: Build the workflow for the ones that work. For the deliverables where AI produced a usable first draft, build an actual workflow. Not “tell everyone to use ChatGPT.” A documented process: here’s the input template, here are the prompts, here’s the quality checklist, here’s what the reviewer should look for. Make it repeatable. Make it consistent.

Week 4: Measure. Track the hours. Before AI, Deliverable X took Person Y an average of Z hours. After the AI workflow, it takes Z-minus-something hours. Track the revision cycles. Track the senior review time. Don’t guess. Measure.

Ongoing: Map the frontier. Keep testing new deliverable types. Some will work. Some won’t. The study tells you that the boundary is jagged and unpredictable, so you can’t assume a task is AI-eligible just because a similar-looking task was. Test each one. Build the map for your specific firm.

This isn’t a six-month transformation initiative. It’s a four-week pilot that costs almost nothing and gives you real data on whether this research applies to your specific work.

The Actual Insight

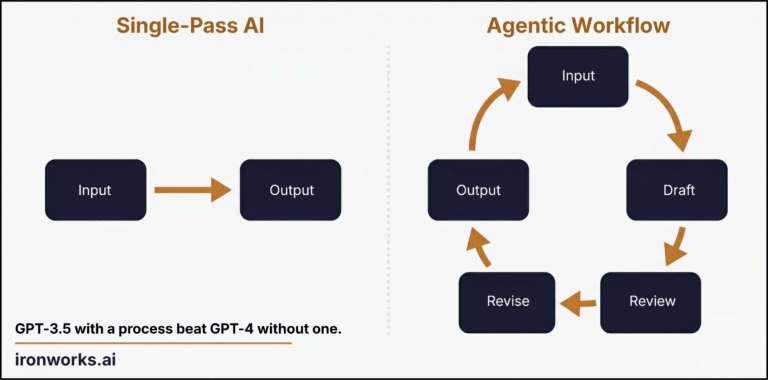

Everyone’s reading the Mollick/BCG study as confirmation that “AI makes workers more productive.” That’s true, but it’s the boring conclusion. It’s the one that sounds good on a keynote stage and doesn’t tell an operator anything they can act on.

The interesting conclusion is about the performance distribution. AI doesn’t just make your team faster. It compresses the gap between your best people and your average people. In a services firm, that gap is the single biggest driver of inconsistency, revision cycles, training costs, and client satisfaction variance.

A 40-person firm doesn’t need everyone to be an A-player. It needs a system where B-players reliably produce B+ work. That’s what this study actually shows is possible — within the frontier, with proper guardrails, on the right tasks.

The $300K in recovered capacity is real math on hypothetical numbers. The revision cycle compression is real. The hiring implications are real. None of it requires a data scientist, an “AI strategy consultant,” or a six-figure implementation budget.

It requires someone who’s willing to spend four weeks testing whether the research applies to their specific workflows. And the intellectual honesty to accept the answer when some of those workflows fall outside the frontier.

That’s it. That’s the whole insight. The study is public. The math is not subtle. What you do about it is the only variable.